A conceptual framework for studying IoT: virtue, capabilities and care

Funda Ustek-Spilda | December 9, 2019

A brief look at recent technology news coverage demonstrates that technology reporting happens only at the extremes. The two future scenarios we are presented with are the dark future of a hyper-surveillance society à la Black Mirror, and the ultimate connected future in which your fridge, kettle, toaster and [self-driving] car can all speak to one another to make your life fully integrated and seamless in the perfect smart city you live in. In this imaginary of two extremes, it is often portrayed that cases of data breaches, mass privacy violations or the implications of datafication for profiling, discrimination and exclusion turn the second scenario into the first one. It is rarely explored, however, how the connected future scenario itself might carry the characteristics associated with the first scenario, and where responsibilities might lie. Further, such a dichotomization presents a flickering search for responsibility, as neither the technologies (e.g. networks, sensors, hardware, software and so on) nor the humans developing them are thought to be [fully] accountable for the consequences.

How should we approach the scenario of two extremes? How is this imaginary for the future influencing the way in which technologies are built today? Where should we look for responsibility, and how should we approach the role of developers and innovators of new technologies? To answer these questions, the VIRT-EU consortium has been working to develop a conceptual framework through which we can study how developers of new and emergent technologies in the field of the Internet of Things (IoT) across Europe are making ethically consequential decisions for the connected technologies they develop. Based on our extensive research into IoT technologies in Europe, we have developed a practical framework for ethics, which incorporates three different philosophical approaches: Virtue Ethics, Capability Approach, and Care Ethics.

In conceptualizing ethics as values in action with responsibilities for power, we draw upon the basic idea that ethics is a process of the application of values in human conduct, including but not limited to reasoning, design, communication, and knowledge-sharing, which guides understanding and decision-making in the development of new and emergent technologies. In practice, this entails sometimes complementary and occasionally competing values being expressed and enacted; and a position of power that developers, designers, and innovators of IoT technologies may find themselves in. As connected devices and services proliferate, data collection and algorithmic processing become increasingly black-boxed. Here, the onus of ethical decision-making about what data should be collected, how it should be processed, stored or shared as well as what kind of hardware should be deployed, given their economic, social and environmental implications, shifts further onto those developing those technologies and services. Technologies, however, are never developed in a vacuum. They are part of and embedded in the social contexts of their developers. This means the constraints and power relationships which the developers may find themselves in also come to be reflected in the technologies they build. Such a positioning entails that we include power relationships and constraints in our basic conceptualization of ethics, and permits us to engage with a range of different ways of thinking about ethics.

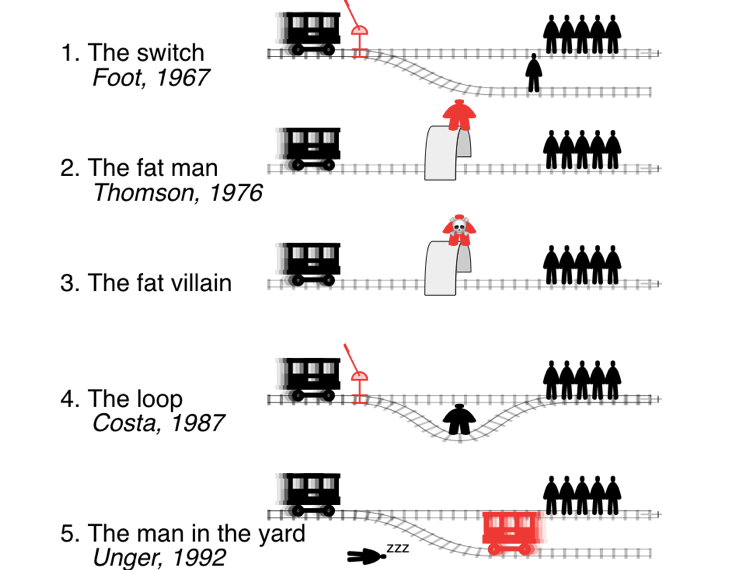

Much of the contemporary writing on ethics and technologies leverages two related approaches: consequentialist and utilitarian ethics. While consequentialist approaches generally focus on the consequences of new technologies for the groups that are impacted by them, utilitarian approaches suggest an evaluation of possible alternatives, given their [potential] consequences for the different groups that might be affected, and advise the decision-maker to choose the one that leads to the “greatest good for the greatest number”. The prominence of thinking with these two ethical approaches is also echoed in our in-situ ethnographic investigations, where conversations with IoT developers often veered toward efficiency, optimization, and cost-analysis when making an ethical decision. Indeed, we often see different versions of the age-old trolley problem discussed in the media and with developers. The question is almost always simplified into an either/or (one person definitely needs to be killed) and the ethical justifiability of killing someone is rarely questioned. Part of the issue we take with these approaches is that they make the rational calculus intractable and often lead to significant reductionism of the issues at hand. This is why we propose to go beyond these and look for alternative approaches with which we can both explain and account for how developers of IoT make ethical decisions, and also provide them with an alternative lens to approach the ethical challenges they face.

Our conceptual framework, which combines Virtue Ethics, Capability Approach, and Care Ethics, stems from our definition of ethics, where both the constraints to making ethical decisions and the responsibilities for power are acknowledged as context-sensitive. Virtue ethics comes from the Aristotelian schools of thought, where leading a virtuous life is considered the basis of leading a good life. These virtues, however, are not qualities one is born with but are learned and acquired through continuous striving towards being good, and indeed, Aristotle counts the continuous struggle to turn acting in a virtuous way into a habit as a virtue on its own. While virtue ethics mainly focuses on an individual’s process of attempting to live a good life, the capability approach examines the ability to lead a good life, given the existing social contexts individuals are embedded in, which present them with a variety of opportunities, but also constraints. Care ethics brings both Virtue Ethics and the Capability Approach together in that it suggests taking into account the shifting obligations and responsibilities of individuals as they are positioned in a web of relations while examining their responsibilities for their decisions. By bringing these approaches together into a coherent framework, we are able to acknowledge that ethics as a process is not exclusively dependent on the principles and actions of individuals, but is also subject to the inherent dialectic of life, where conflicting demands, obligations and structural conditions can both limit and shape even the best intentions.

In our ongoing engagement with developers of IoT products as part of our ethnographic fieldwork, one question we ask is: Could this [device/software/platform, etc.] be made differently? The answer to the question is not always an outright “Yes”, but it is not an outright “No” either. How developers elaborate on their response tells us important things about where their values lie, what they care about and how they might be constrained in building products that speak to those values and concerns. For instance, I asked this question in a conversation with a company that builds fridge cameras. The fridge camera they built takes a picture of the contents of the fridge each time the fridge door is opened and sends it to its owner in real time through their app. The owner is then able to keep track of what is in her fridge at all times, plan meals accordingly and, ideally, reduce food waste, the developer explained to me. So, clearly, the company and the developer[s] were concerned about the growing problem of food waste in the UK and the world, but also about the mental work required to keep track of the contents of our fridge in our ever-busier daily lives. But would the answer to these two concerns and values need to be more surveillance-inducing cameras that we put in our fridges? Would having a picture of the contents of our fridge each time somebody opens its door really solve our problem of food waste or the worries [and the mental work] of what to cook for dinner?

Here, the capability approach is helpful for acquiring a reflexive standpoint and walking through the opportunities and the constraints that the company developing fridge cameras has. The opportunity, at the very least, is to make an intervention in a growing problem and a market, given the increasing demand from consumers to have products designed with environmental concerns in mind. The constraint, on the other hand, might be one of technology and accessibility. Sensors that would detect the quality of food and indicate whether or not a particular item of food is good for consumption require highly complex technological infrastructures, given the variety of food items that can be stored in a fridge. Equipping fridges with these sensors would also mean that fridge prices might be higher, and the cost of purchasing the IoT technology would be much higher than that of the fridge camera, as it would involve the customer buying a new fridge altogether. So, the calculations of market share, the cost-analysis of profits and revenues, and potentially the push from investors to be “innovative” with simple and scalable solutions might be constraining the developers. Thus, Virtue Ethics, the Capability Approach, and Care Ethics help us ground our thinking and analysis in the contexts of the developers we research, rather than engage in ethical evaluations based on consequential logic or top-down ethical principles.

In the following series of blog posts, we will show how using this conceptual framework enables us to move away from consequentialist approaches to emergent technologies, and move beyond the imaginary of two extremes (the dark future of hyper-surveillance or the ultimate connected future). This entails that we are able to situate both the developers, as individuals embedded in social contexts that shape, constrain or at the very least influence their decision-making, and the technologies they work with (e.g. hardware, software, networks, and sensors) that limit or facilitate these decisions. We are able to consider what kind of values they identify with and what kind of virtues can be integrated into the design and development of new products; and where they think their obligations lie in adhering to, tweaking or moving away from those values.

This blog post is based on the Values and Ethics in Innovation for Responsible Technology in Europe July 2018 report. Contributors to the report are Irina Shklovski, Rachel Douglas-Jones, Luca Rossi, Ester Fritsch, Obaida Hanteer, Matteo Magnani, Davide Vega D’aurelio, Annelie Berner, Monica Seyfried, Alison Powell, Funda Ustek-Spilda, Sebastián Lehuedé, Alessandro Mantelero, Maria Samatha Esposito, Marcella Sarale, Javier Ruiz, Ed Johnson-Williams, Pasquale Pellegrino and Inda Memić.